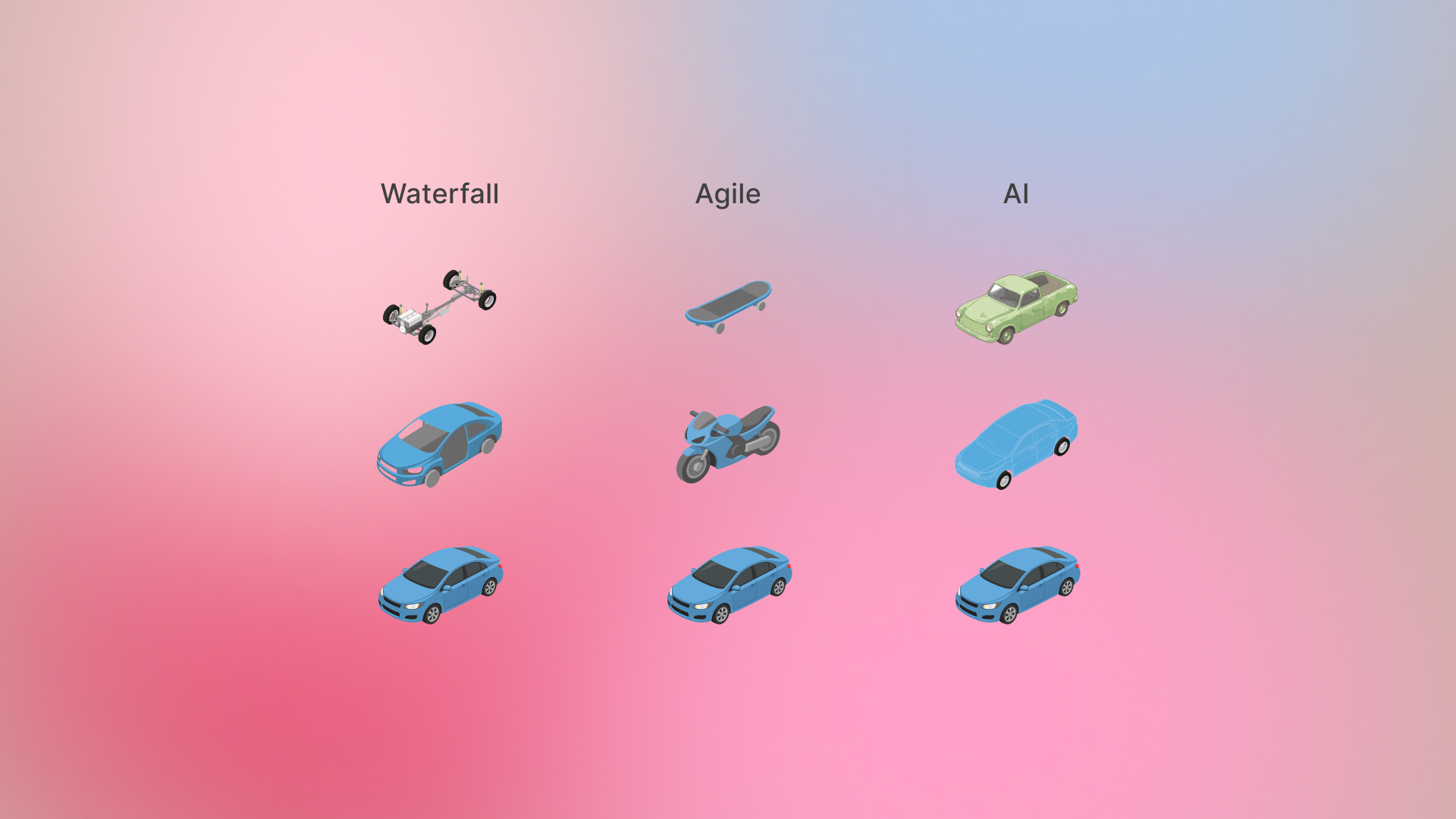

Here is a familiar illustration that has quietly shaped how an entire generation of product teams thinks about building. It compares Waterfall and Agile using the example of a car. In Waterfall, the product is delivered in parts: a wheel, then an axle, then a chassis, none of which is useful on its own. Agile corrects this by ensuring that every stage delivers something usable: a skateboard, then a scooter, then a bicycle, and eventually a car.

For years, this image has been effective because it captures a fundamental truth. Users do not care about your roadmap; they care about whether what you are giving them works. Agile made that idea operational. It forced teams to stop thinking in terms of internal milestones and start thinking in terms of user value.

But the reason this model worked so well is also the reason it begins to fail in the context of AI.

Agile assumes that, at some level, you know what you are building. You may not know every feature or every edge case, but you understand the nature of the end state. You know what a car is. The work is about breaking it down into smaller, usable steps without losing coherence. Each intermediate stage is not random; it is a reduced but valid version of the final product.

When teams begin working with AI systems, especially generative ones, this assumption starts to erode. The difficulty is not in breaking down the problem. The difficulty is that the problem itself is often not fully formed. The end state exists more as a feeling than a specification. Teams are trying to arrive at something they cannot completely describe in advance.

This changes the nature of iteration in a way that is easy to underestimate.

In a traditional Agile environment, iteration is a process of increasing completeness. You build a smaller version, you validate it, and then you extend it. Progress is visible and measurable because each step brings you closer to a clearly understood outcome. Even when you pivot, you are still operating within a defined space.

With AI, iteration is less about completeness and more about alignment. The first output is rarely a “smaller version” of what you want. It is often something that looks structurally correct but feels wrong in ways that are difficult to articulate. You refine it, and it improves in one dimension while regressing in another. You adjust again, and it gets closer, but something still does not sit right.
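One way to make "improves in one dimension while regressing in another" concrete is to score each iteration on a few named dimensions and compare the scores. The sketch below is only an illustration: the dimension names, the 1-to-5 reviewer scores, and the `compare_iterations` helper are all hypothetical, not part of any particular evaluation tool.

```python
def compare_iterations(
    previous: dict[str, float], current: dict[str, float]
) -> dict[str, list[str]]:
    """List which quality dimensions improved or regressed between two outputs."""
    improved = [dim for dim in previous if current[dim] > previous[dim]]
    regressed = [dim for dim in previous if current[dim] < previous[dim]]
    return {"improved": improved, "regressed": regressed}


# Hypothetical reviewer scores (1 to 5) for two successive outputs.
first_draft = {"tone": 2, "accuracy": 4, "brevity": 3}
second_draft = {"tone": 4, "accuracy": 3, "brevity": 3}

print(compare_iterations(first_draft, second_draft))
```

The point is not the scoring scheme itself. A single "better or worse" verdict hides exactly the trade-offs the team needs to see.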

What you are doing in that process is not building in parts. You are navigating a space of possibilities, trying to converge on something that matches an internal sense of what “good” should be.

This is where many teams experience friction without fully understanding why. They apply the same mental models that worked in Agile (clear breakdowns, defined acceptance criteria, predictable iteration cycles) and find that progress feels inconsistent. Outputs vary. Improvements are uneven. The path is not linear.

The underlying issue is that AI systems do not behave like deterministic systems. In traditional software, behavior is explicitly defined. Given the same input, the system produces the same output. Iteration improves the system by making it more complete and more reliable.

AI systems, by contrast, are probabilistic. They produce responses based on learned patterns, not fixed rules. Two runs with slightly different inputs can lead to significantly different outputs, and when outputs are sampled, even identical inputs can. The role of the team shifts from implementing logic to guiding behavior.
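The contrast can be sketched in a few lines. This is a toy illustration, not a real model: `deterministic_discount` stands in for explicitly defined logic, and `sample_next_word` with its made-up `NEXT_WORD_WEIGHTS` stands in for a system that draws its output from a probability distribution.

```python
import random


def deterministic_discount(price: float) -> float:
    """Traditional software: an explicit rule, so the same input gives the same output."""
    return round(price * 0.9, 2)


# Hypothetical weights; a real generative model learns a far larger distribution.
NEXT_WORD_WEIGHTS = {"car": 0.5, "bicycle": 0.3, "skateboard": 0.2}


def sample_next_word(weights: dict[str, float], rng: random.Random) -> str:
    """Toy probabilistic system: the output is drawn at random, weighted by likelihood."""
    words = list(weights)
    return rng.choices(words, weights=[weights[w] for w in words], k=1)[0]


def sample_many(n: int, seed: int) -> list[str]:
    """Call the toy system n times with the same input, using a seeded generator."""
    rng = random.Random(seed)
    return [sample_next_word(NEXT_WORD_WEIGHTS, rng) for _ in range(n)]


print(deterministic_discount(100.0))  # always the same value for this input
print(sample_many(5, seed=1))         # repeated calls, same input, varying words
```

Calling `deterministic_discount` twice with the same price can never disagree. Calling `sample_next_word` twice with the same weights can, which is why the work shifts toward judging outputs instead of specifying them.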

This introduces a different kind of work, one that is less about execution and more about evaluation.

In this environment, the most critical skill is not the ability to break down tasks efficiently. It is the ability to recognize when something is almost right, and to understand what is missing. This is not something that can always be captured in a requirement document. It is perceptual. It relies on experience, exposure, and a developed sense of quality.

This is where the idea of “taste” becomes relevant, not in the superficial sense of personal preference, but as a form of judgment. Over time, teams and individuals develop an internal benchmark for what good looks like. They can see misalignment even when they cannot immediately explain it. They know when to keep pushing and when something is truly finished.

In Agile environments, discipline was often the differentiator. Teams that followed the process well, maintained clarity, and executed consistently tended to succeed. The system was designed to reduce dependence on individual intuition and make outcomes more predictable.

In AI-driven environments, that balance shifts. Process still matters, but it is no longer the primary source of advantage. Two teams can use the same models, the same tools, and similar workflows, yet produce very different outcomes. The difference lies in how they evaluate and refine what the system produces.

This has significant implications for how we think about design.

Design is no longer confined to structuring interfaces or defining user flows. It extends into shaping how the system behaves, how it responds under uncertainty, and how it evolves over time. Designers are increasingly involved in defining not just what the user sees, but how the system interprets and acts.

In many ways, this is closer to directing than building. You are not assembling components into a fixed structure; you are guiding a system towards a desired behavior.

The original Waterfall vs Agile illustration was powerful because it simplified a complex shift into something immediately understandable. But like all simplifications, it has limits. It assumes that progress can be represented as a sequence of increasingly complete artifacts, each resembling the final product more closely than the last.

AI does not follow that pattern. Progress is less about resemblance and more about convergence. Intermediate outputs may not look like earlier or later ones in any obvious way. What matters is whether they are moving closer to the intended experience, even if that intention is still being formed.

Ultimately, the shift to AI is not just about new tools or new technologies. It is about a different relationship with the act of building. We are moving from a world where we construct systems based on clearly defined specifications to one where we arrive at those specifications through interaction with the system itself.

In that world, knowing how to build is no longer enough. The harder, more important question is whether you know what you are trying to build in the first place, and whether you can recognize it when you finally see it.